Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this…)

so like a fool I decided to search the web. specifically for which network protocol Lisp REPLs use these days (is it nREPL? or is that just a clojure thing with ambitions?)

and the first extremely SEOed result on ddg was this bizarre blend of an obscure research lisp from 2012 and LLM articles about how Lisp is used in mental health:

Numerous applications and tools are being developed to support mental health and wellness. Among the varied programming languages at the forefront, Lisp stands out due to its unique capabilities in cognitive modeling and behavior analysis.

so I know exactly what this is, but why is this? what even is the game here?

Found on HN:

[Working with AI is] An Overwhelmingly Negative and Demoralizing Force

25 upvotes, no comments yet…

It’s still on the front page, almost 300 comments. Haven’t time to review them all but doesn’t look like the promptfondlers have the upper hand

Has your friend talked with current bio research students? It’s very common to hear that people are having success writing Python/R/Matlab/bash scripts using these tools when they otherwise wouldn’t have been able to.

Possibly this is just among the smallish group of students I know at MIT, but I would be surprised to hear that a biomedical researcher has no use for them.

ahahahahaaha

@froztbyte @gerikson if your code was written by an LLM your results are indistinguishable from Wrong

I have strong feelings on research students who are not able to learn how to write bash and python etc scripts.

“Imagine a technology so useless you cannot run doom on it” https://bsky.app/profile/sosowski.bsky.social/post/3lm63a2srgc24

The kokotajlo/scoot thing apparently made it to the new york times.

So this is what that was about:

stubsack post from two months ago

On slightly more relevant news the main post is scoot asking if anyone can put him in contact with someone from a major news publication so he can pitch an op-ed by a notable ex-OpenAI researcher that will be ghost-written by him (meaning siskind) on the subject of how they (the ex researcher) opened a forecast market that predicts ASI by the end of Trump’s term, so be on the lookout for that when it materializes I guess.

when you say “made it”, i think FUCKING ROOSE

Check out the by-line. Big surprise!

missed that at the time, but you’d think scoot would have an easy time finding a contact at a major publication like the NYT…

Spooks as a service

Solid, high-quality sneer from Adactio - the end is a particular highlight:

The worst of the internet is continuously attacking the best of the internet. This is a distributed denial of service attack on the good parts of the World Wide Web.

If you’re using the products powered by these attacks, you’re part of the problem. Don’t pretend it’s cute to ask ChatGPT for something. Don’t pretend it’s somehow being technologically open-minded to continuously search for nails to hit with the latest “AI” hammers.

Utterly rancid linkedin post:

text inside image:

Why can planes “fly” but AI cannot “think”?

An airplane does not flap its wings. And an autopilot is not the same as a pilot. Still, everybody is ok with saying that a plane “flies” and an autopilot “pilots” a plane.

This is the difference between the same system and a system that performs the same function.

When it comes to flight, we focus on function, not mechanism. A plane achieves the same outcome as birds (staying airborne) through entirely different means, yet we comfortably use the word “fly” for both.

With Generative AI, something strange happens. We insist that only biological brains can “think” or “understand” language. In contrast to planes, we focus on the system, not the function. When AI strings together words (which it does, among other things), we try to create new terms to avoid admitting similarity of function.

When we use a verb to describe an AI function that resembles human cognition, we are immediately accused of “anthropomorphizing.” In some way, popular opinion dictates that no system other than the human brain can think.

I wonder: why?

Dijkstra did it first, but it is very ai-booster to steal work without credit or understanding, I guess.

The question of whether Machines Can Think… is about as relevant as the question of whether Submarines Can Swim.

Yes the 2 rs in strawberry machine thinks. In the same way that an airplane flies. /s

I can use bad analogies also!

- If airplanes can fly, why can’t they fly to the moon? It is a straightforward extension of existing flight technology, and plotting airplane max altitude from 1900-1920 shows exponential improvement in max altitude. People who are denying moon-plane potential just aren’t looking at the hard quantitative numbers in the industry. In fact, with no atmosphere in the way, past a certain threshold airplanes should be able to get higher and higher and faster and faster without anything to slow them down.

I think Eliezer might have started the bad airplane analogies… let me see if I can find a link… and I found an analogy from the same author as the 2027 fanfic forecast: https://www.lesswrong.com/posts/HhWhaSzQr6xmBki8F/birds-brains-planes-and-ai-against-appeals-to-the-complexity

Eliezer used a tortured metaphor about rockets, so I still blame him for the tortured airplane metaphor: https://www.lesswrong.com/posts/Gg9a4y8reWKtLe3Tn/the-rocket-alignment-problem

JFC I click on the rocket alignment link, it’s a yud dialogue between “alfonso” and “beth”. I am not dexy’ed up enough to read this shit.

of course MBA came up with this

an airplane doesn’t flap its wings

You ever see a random shitpost video, like the background music, look it up and realize you already have the vinyl record? That just happened to me.

additional layer is that according to comments first track is also used in chinese nature documentaries

And as the same channel demonstrates, there are ways to fly without any wings at all

spoiler

…as well as with them!

Man, it’s really true what they say: the West can’t meme.

This is too fucking dumb to even be called sophistry.

slophistry

It’s almost like the flapping part isn’t a requirement for something to fly.

So in the past week or so a lot of pedestrian crossings in Silicon Valley were “hacked” (probably never changed the default password lol) to make them talk like tech figures.

Here are a few. Note that these voices are most likely AI generated.

- A crosswalk with the voice of Elon Musk

- A crosswalk with the voice of Zuck

- Elon Musk crosswalk just wants to be friends (second video) also Zuck crosswalk is proud of his work (third video).

I didn’t get to hear any of them in person, however the crosswalk near my place has recently stopped saying “change password” constantly, which I’m happy about.

New YouTube video I ran across: The Art Of Poison-Pilling Music Files

I went into this with negative expectations; I recall being offended in high school that The Flashbulb was artificially sped up, unlike my heroes of neoclassical guitar and progressive-rock keyboards, and I’ve felt that their recent thoughts on newer music-making technology have been hypocritical. That said, this was a great video and I’m glad you shared it.

Ears and eyes are different. We deconvolve visual data in the brain, but our ears actually perform a Fourier decomposition with physical hardware. As a result, psychoacoustics is a real and non-trivial science, used e.g. in MP3, which limits what an adversary can do to frustrate classification or learning, because the result still has to sound like music in order to get any playtime among humans. Meanwhile I’m always worried that these adversarial groups are going to accidentally propagate something like McCollough stripes, a genuine cognitohazard that causes edges to become color-coded in the visual cortex for (up to) months after a few minutes of exposure; it’s a kind of possible harm that fundamentally defies automatic classification by definition.

HarmonyCloak seems like a fairly boring adversarial tool for protecting the music industry from the music industry. Their code is incomplete and likely never going to get properly published; again we’re seeing an industry-capture research group taking and not giving back to the Free Software community. I think all of the demos shown here are genuine, but he fully admits that this is a compute-intensive process which I estimate is going to slide back out of affordability by the end of 2026. This is going to stop being effective as soon as we get back into AI winter, but I’m not going to cry for Nashville.

I really like the two attacks shown near the end, starting around 22:00. The first attack, if genuinely not audible to humans, is likely a Mosquito-style frequency that is above hearing range and physically vibrates the components of the microphone. Hofstadter and the Tortoise would be proud, although I’m concerned about the potential long-term effects on humans. The second attack is again adversarial but specific to models on home-assistant devices which are trained to ignore some loud sounds; I can’t tell spectrographically whether that’s also done above hearing range or not. I’m reluctant to call for attacks on home assistants, but they’re great targets.

Fundamentally this is a video that doesn’t want to talk about how musicians actually rip each other off. The “tones and rhythms” that he keeps showing with nice visualizations have been machine-learnable for decades, ranging from beat-finders to frequency-analyzers to chord-spellers to track-isolators built into our music editors. He doubles down on copyright despite building businesses that profit from Free Software. And, most gratingly, he talks about the Pareto principle while ignoring that the typical musician is never able to make a career out of their art.

which I estimate is going to slide back out of affordability by the end of 2026.

You don’t think the coming crash is going to drive compute costs down? I think the VC money for training runs drying up could drive down costs substantially… but maybe the crash hits other aspects of the supply chain and cost of GPUs and compute goes back up.

He doubles down on copyright despite building businesses that profit from Free Software. And, most gratingly, he talks about the Pareto principle while ignoring that the typical musician is never able to make a career out of their art.

Yeah this shit grates so much. Copyright is so often a tool of capital to extract rent from other people’s labor.

It’s the cost of the electricity, not the cost of the GPU!

Empirically, we might estimate that a single training-capable GPU can pull nearly 1 kilowatt; an H100 GPU board is rated for 700W on its own in terms of temperature dissipation and the board pulls more than that when memory is active. I happen to live in the Pacific Northwest near lots of wind, rivers, and solar power, so electricity is barely 18 cents/kilowatt-hour and I’d say that it costs at least a dollar to run such a GPU (at full load) for 6hrs. Also, I estimate that the GPU market is currently offering a 50% discount on average for refurbished/like-new GPUs with about 5yrs of service, and the H100 is about $25k new, so they might depreciate at around $2500/yr. Finally, I picked the H100 because it’s around the peak of efficiency for this particular AI season; local inference is going to be more expensive when we do apples-to-apples units like tokens/watt.

In short, with bad napkin arithmetic, an H100 costs at least $4/day to operate while depreciating only $6.85/day or so; operating costs approach or exceed the depreciation rate. This leads to a hot-potato market where reselling the asset is worth more than operating it. In the limit, assets with no depreciation relative to opex are treated like securities, and we’re already seeing multiple groups squatting like dragons upon piles of nVidia products while the cost of renting cloudy H100s has jumped from like $2/hr to $9/hr over the past year. VCs are withdrawing, yes, and they’re no longer paying the power bills.
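For anyone who wants to poke at the napkin arithmetic above, here is a minimal sketch in Python. Every input is the rough estimate from the comment (about 1 kW per GPU at full load, 18 cents/kWh, $25k new, roughly 50% resale value after about 5 years); the constant and function names are made up for illustration, and none of the figures are measurements.

```python
# Napkin arithmetic for running vs. holding an H100.
# All inputs are the rough estimates quoted in the thread, not measured values.
POWER_KW = 1.0          # assumed draw of one training-capable GPU at full load
PRICE_PER_KWH = 0.18    # USD per kilowatt-hour, as quoted above
NEW_PRICE = 25_000      # USD, approximate price of a new H100
RESALE_FRACTION = 0.5   # refurbished units sell at roughly a 50% discount...
SERVICE_YEARS = 5       # ...after about 5 years of service


def electricity_cost(hours: float) -> float:
    """Electricity cost in USD for running one GPU at full load."""
    return POWER_KW * PRICE_PER_KWH * hours


def depreciation_per_day() -> float:
    """Straight-line depreciation in USD per day over the service period."""
    lost_value = NEW_PRICE * (1 - RESALE_FRACTION)
    return lost_value / SERVICE_YEARS / 365


if __name__ == "__main__":
    print(f"6 hours at full load:  ${electricity_cost(6):.2f}")    # $1.08
    print(f"24 hours at full load: ${electricity_cost(24):.2f}")   # $4.32
    print(f"depreciation per day:  ${depreciation_per_day():.2f}") # $6.85
```

This only counts electricity for the board itself. Cooling, hosting, and the rest of the server would push the operating side higher, which only strengthens the point that opex is in the same ballpark as depreciation.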

That is substantially worse than I realized. So possibly people could sit on GPUs for years after the bubble pops instead of selling them or using them? (Particularly if the crash means NVIDIA decides to slow how fast they push the bleeding edge on GPU specs so newer ones don’t as radically outperform older ones?)

So possibly people could sit on GPUs for years after the bubble pops instead of selling them or using them?

I mean, who are you going to sell them to? the other bagholders are going to be just as fucked, and it’s not like there’s an otherwise massive market for these things

Ultra ultra high end gaming? Okay, looking at the link, 94 GB of GPU memory is probably excessive even for eccentrics cranking the graphics settings all the way up. Hobbyists with way too much money trying to screw around with open weight models even after the bubble bursts? Which would presume LLMs or something similar continue to capture hobbyists’ interests and that smaller models can’t satisfy their interests. Crypto mining with algorithms compatible with GPUs? And crypto is its own scam ecosystem, but one that seems to refuse to die permanently.

I think the ultra high end gaming is the closest to a workable market, and even that would require a substantial discount.

in the same vein, I did some (somewhat wildly) speculative analysis around this a while back too

didn’t really try to model “actual workload” (as in physical, vs the “rented compute time” aspect), and therein lies an important distinction: actually owning the GPU puts you at a constant minimum burn rate

and as corbin points out wrt power, these are also specialised formfactor devices. and they’re going to be getting run at close to max util their entire operated lifespan (because of silicon shortage). so even if any do get sold… long mileage

tesla: “your car is not your car and we have deep, varied firmware and systems access to it on a permanent basis. we can see you and control you at all times. piss us off and we’ll turn off the car that we own.”

also tesla: “sorry no you can’t return it”

I wonder how often Musk fires employees who explain to him that no, using Tesla cars for distributed computing is a bad idea and we should stop working on this.

Some more low effort image posting. This zine was in Connolly Books for free. I’m not sure who the author is, but I thought the text was spot on and the illustrations were great. Sorry for no captions/transcriptions

Any idea why the flag resembles the Swiss one (the proportions are wrong though)?

It’s the network state flag. Which I presume is based on the Swiss flag, because they are horny for the idea of a small, rich, well-connected country that profits from mass murder and exploitation.

Oh, I only knew of some of it, thanks

Tired: Propaganda of the deed

Wired: Propaganda of the Sneer

I don’t think whoever wrote this zine goes here, but they should!

Otoh having different groups of the sneer, sneercells (no wait that name needs work) if you will, can also be useful, esp as authoritarianism etc increases. (Not that I think govs would go after us, apart from an infiltration risk).

E: Hell, I myself am prob a risk factor. First the Dutch secret service should have a file on me (else they are not doing their job, I was active in student activism, and did STEM (which also had an active (and targeted for infiltration, we know because counterhacks) hacker group) education (a known terrorism risk increaser), before my faulty brain wiring caused me to drop off the map a bit, and I know several of these groups have been targeted for infiltration recently, and long ago). I also have had just too many official twitter police accounts follow me and then unfollow me without liking a single post for it to be just a coincidence (three times being enemy action). Of course, I’m now old and passive, so if they still think I’m a risk they certainly are not doing their job (I could be a source of information however). But this is enough for me to consider that my real life identity is known enough to be a risk. And this is just about the Dutch police/secret service, there is also the risk of TracingWoodGrains-like people trying to get all up in your (social) networks to try and get validation from themotte. And I have read enough stories about cybercriminals to know that you need to think about this stuff long before it is actually needed, also I’m paranoid.

Samizdat of the Sneer?

One more pic:

Subtle dig on Nick Land not being relevant anymore considering that drawing is of him 30+ years ago.

What does he look like now? I shudder to think.

BALD

Moldbug really is the one exception here isn’t he?

And the least deserving too!

According to yt, that was him in 2017. This is the clearest I could (quickly) find, others are just low res webcam things.

Yowza.

Time waits for nobody.

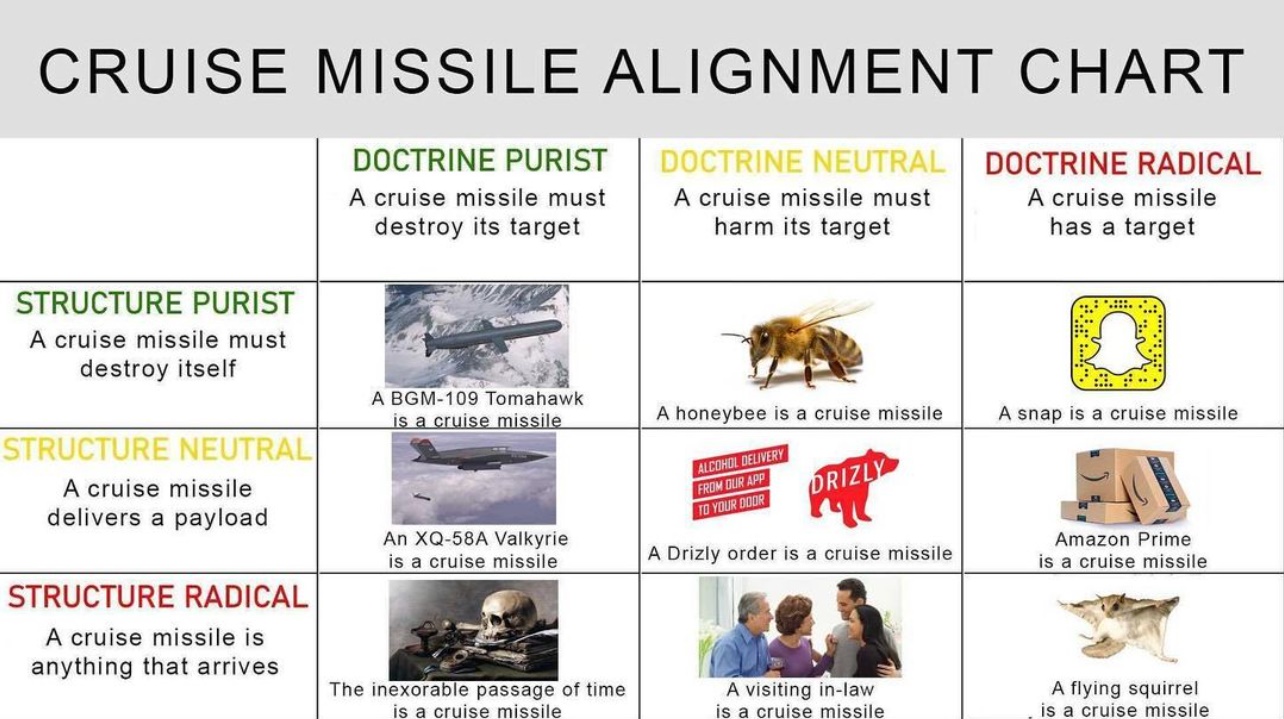

context

Okay, one more pic, the back cover. Can’t say how reliable these sources are.

Shopify going all in on AI, apparently, and the CEO is having a proper born-again moment. Don’t have a source more concrete than this yet:

https://cyberplace.social/@GossiTheDog/114298302252798365

(and transcript: https://infosec.exchange/@barubary/114298367285112648)

It’s a lot like this:

Using AI effectively is now a fundamental expectation of everyone at Shopify. It’s a tool of all trades today, and will only grow in importance. Frankly, I don’t think it’s feasible to opt out of learning the skill of applying AI in your craft; you are welcome to try, but I want to be honest I cannot see this working out today, and definitely not tomorrow. Stagnation is almost certain, and stagnation is slow-motion failure. If you’re not climbing, you’re sliding.

That text is painful to read (I wonder how much of it is slop)… ugh, what is chatgpt doing to the brains of people? (And I’ve had the bad luck of reading some pretty unhinged pro-AI stuff from management at my employer too, although not as bad as this mail from shopify).

Is there a precedent for this hype? For the extent of damage that it will cause? Most tech industry hype is a waste of resources, but otherwise mostly harmless. Like that time when everyone believed that XML is the holy grail, that was silly, and although we still have to deal with some unfortunate data formats from those days, it passed. There were worse ones, most notably blockchain was almost catastrophic, but most companies hesitated to go all-in and pursued it more on the side, so when that hype faded, they simply buried their involvement and that was that.

But “AI”… it has such potential to create significant and long term damage to the companies adopting it. The slop code alone might haunt them forever, in ways that even the worst excesses of 90s enterprise java couldn’t. There’s nothing to learn from resulting failure, except “don’t use AI”.

In this case, given shopify’s general behaviour, I won’t be sad at all though if they crash and fail.

I also thought ‘guess LLMs dont work as an editor’.

And blockchains did massive damage, all the ransomware crime would be impossible if the tech world had not jumped into blockchain as much as they did and created and kept maintaining the ecosystem. (It also caused the techbro people who now pivot to AI to rise, so it is connected). Note that the damage done by BEC is still greater than ransomware, so not cybersecurity advice.

But I get your point, I think a real example would be Facebook’s pivot to video. Which destroyed companies.

Yes, that’s true. Indirectly it costs them all dearly with ransomware. Likewise, I think the overall damage that AI will do to society as a whole will be much, much greater than just rotting some tech companies from the inside (most of which I wouldn’t be sad anyway if they went away…).

What I meant is that with blockchain the big tech companies at least didn’t willingly destroy their products, their processes, their decision making etc. I.e. they didn’t put blockchain into absolutely everything, all the way to MS Notepad. What I find staggering about this hype is the depth of the delusion, the willingness to not just experiment with it but really go all-in.

yeah, no I agree that blockchain is a bad example, just think we shouldn’t understate the massive damage that has done. Not just in actually damaged systems but also just in additional cost that now everybody has to worry about this. Same as how AI is not just causing climate change problems by running it, but the scraping as well has increased the cost of running a webserver by 50% in load alone. (which on a global scale is just horrid). And then there is the forcing of it in everything, the burning of the boats.

blockchain targeted libertarian post-goldbug pro-cyberpunk-dystopia fuckheads, llms target management types (you will replace workers with machines!), maybe that’s why

Extreme sent at 4am energy.

:( looked in my old CS dept’s discord, recruitment posts for the “Existential Risk Laboratory” running an intro fellowship for AI Safety.

Looks inside at materials, fkn Bostrom and Kelsey Piper and whole slew of BS about alignment faking. Ofc the founder is an effective altruist getting a graduate degree in public policy.

that’s CFAR cult jargon right?

Not sure! What is CFAR?

Center For Applied Rationality. They hosted “workshops” where people could learn to be more rational. Except their methods weren’t really tested. And pretty culty. And reaching the correct conclusions (on topics such as AI doom) was treated as proof of rationality.

Edit: still host, present tense. I had misremembered some news of some other rationality adjacent institution as them shutting down, nope, they are still going strong, offering regular 4 day ~~brainwashing sessions~~ workshops.

Mesa-optimization? I’m not sure who in the lesswrong sphere coined it… but yeah, it’s one of their “technical” terms that don’t actually have academic publishing behind them, so jargon.

Instrumental convergence… I think Bostrom coined that one?

The AI alignment forum has a claimed origin here. Is anyone on the article here from CFAR?

Mesa-optimization

Why use the perfectly fine ‘inner optimizer’ mentioned in the references when you can just ask google translate to give you the clunkiest, most pedestrian and also wrong part of speech Greek term to use in place of ‘in’ instead?

Also natural selection is totally like gradient descent brah, even though evolutionary algorithms actually modeled after natural selection used to be their own subcategory of AI before the term just came to mean lying chatbot.

I’m thinking they hired Jar-Jar Binks to the team.

Mesa-optimization… that must be when you rail some crushed-up Adderall XRs, boof some modafinil for good measure, and spend the night making sure your kitchen table surface is perfectly flat with no defects abrasions deviations contusions…

and you wrap it off with some linux 3d graphics lib hacking

Some dark urge found me skim-reading a recent AI doomer blog post. I was startled awake by this most unsettling passage:

My wife wrote a letter to our infant daughter recently. It concluded:

I don’t know that we can offer you a good world, or even one that will be around for all that much longer. But I hope we can offer you a good childhood. […]

Though the theoretical possibility had always been percolating somewhere in the back of my mind, it wasn’t until now that I viscerally realized that P(doomers reproducing) was greater than zero. And with other doomers no less.

Left brooding on this development, I drudged along until-

BAhahaha what the fuck

I can’t. This is beyond parody.

Completely lost it here. Nothing could have prepared me for the poorly handwritten wrist tattoo.

Creating space for miracles

Doom feels really likely to me. […] But who knows, perhaps one of my assumptions is wrong. Perhaps there’s some luck better than humanity deserves. If this happens to be the case, I want to be in a position to make use of it.

Oh how rational! Willing to entertain the idea that maybe, theoretically, the doomsday prediction could be off by a few days?

I’m not sure that I ever strongly felt that I would die at eighty or so. I had a religious youth and believed in an immortal soul. Even when I came out of that, I quickly believed in the potential of radical transhuman life extension.

This guy thought he was getting clean but he was actually replacing weed with heroin

I really convinced myself that “doomsday cult” was hyperbole but uhh, nope, it’s 107% real.

I had a religious youth and believed in an immortal soul. Even when I came out of that, I quickly believed in the potential of radical transhuman life extension.

My dude you’re so, so, sooo close to realising it, you should spontaneously quantum-tunnel into self-awareness any second now

Doom feels really likely to me. […] But who knows, perhaps one of my assumptions is wrong. Perhaps there’s some luck better than humanity deserves. If this happens to be the case, I want to be in a position to make use of it.

This line actually really annoys me, because they are already set up for moving the end date on their doomsday prediction as needed while still maintaining their overall doomerism.

The storm rages within

Yet I hold F to pay respects

:'( sad one. feel bad for the bebe, being raised by insane people.

Also, man why do I click on these links and read the LWers comments. It’s always insufferable people being like, “woe is us, to be cursed with the forbidden knowledge of AI doom, we are all such deep thinkers, the lay person simply could not understand the danger of ai” like bruv it aint that deep, i think i can summarize it as follows:

hits blunt “bruv, imagine if you were a porkrind, you wouldn’t be able to tell why a person is eating a hotdog, ai will be like we are to a porkchop, and to get more hotdogs humans will find a way to turn the sun into a meat casing, this is the principle of intestinal convergence”

Literally saw another comment where one of them accused the other of being a “super intelligence denier” (i.e., heretic) for suggesting maybe we should wait till the robot swarms are coming over the hills before we declare it’s game over.

Oh that tattoo is regrettable

At the start they state

The disappointment of imminent death is all the more crushing because just a few years ago researchers announced breakthrough discoveries that suggested [existing, adult] humans could have healthspans of thousands of years. To drop the analogy, here I’m talking about my transhumanist beliefs. The laws of physics don’t demand that humans slowly decay and die at eighty. It is within our engineering prowess to defeat death, and until recently I thought we might just do that, and I and my loved ones would live for millennia, becoming post-human superbeings.

This is, frankly, bonkers. I’d rate the following in descending order of probability

- worldwide societal collapse due to climate change

- we develop an AI that will kill us all for unspecified reasons

- we establish viable self-sustaining societies outside the limits of Earth

- we develop techniques that allow everyone to live effectively forever

If the first happens, it removes the material requirements for the latter things to happen. This is an extreme form of “denial of the flesh”, the inability to realize that without food or water no-one will be working on AI or life extension tech.

“Im 99% sure I will die in the next year because of super duper intelligence, but in a world where that doesnt happen i plan to live 1000 years” surely is a forecast. Surprised they don’t break their own necks on the whiplash from this take.

yet I hold

space for it

Rupi Kaur should sue

I don’t know that we can offer you a good world, or even one that will be around for all that much longer. But I hope we can offer you a good childhood. […]

When “The world is gonna end soon so let’s just rawdog from now on” gets real

Teach your children to envy the dead

Apparently including a camera-esque filename in prompts for the latest Midjourney release can make it more photorealistic. Unfortunately it also looks like the distinctive AI art style was pretty key to hiding the usual set of AI generated image “tells”. Mirrors, hands, teeth, etc are all very visibly wrong.

Apparently including a camera-esque filename in prompts for the latest Midjourney release can make it more photorealistic.

This entire enterprise is just shamanry, we are like two steps away from “throwing a goat into a volcano makes your next prompt more realistic”

I feel like some of the doomers are already setting things up to pivot when their most major recent prophecy (AI 2027) fails:

From here:

(My modal timeline has loss of control of Earth mostly happening in 2028, rather than late 2027, but nitpicking at that scale hardly matters.)

It starts with some rationalist jargon to say the author agrees but one year later…

AI 2027 knows this. Their scenario is unrealistically smooth. If they added a couple weird, impactful events, it would be more realistic in its weirdness, but of course it would be simultaneously less realistic in that those particular events are unlikely to occur. This is why the modal narrative, which is more likely than any other particular story, centers around loss of human control the end of 2027, but the median narrative is probably around 2030 or 2031.

Further walking the timeline back, adding qualifiers and exceptions that the authors of AI 2027 somehow didn’t explain before. Also, the reason AI 2027 didn’t have any mention of Trump blowing up the timeline doing insane shit is because Scott (and maybe some of the other authors, idk) like glazing Trump.

I expect the bottlenecks to pinch harder, and for 4x algorithmic progress to be an overestimate…

No shit, that is what every software engineer blogging about LLMs (even the credulous ones) says, even allowing that LLMs get better at raw code writing! Maybe this author is better in touch with reality than most lesswrongers…

…but not by much.

Nope, they still have insane expectations.

Most of my disagreements are quibbles

Then why did you bother writing this? Anyway, I feel like this author has set themselves up to claim credit when it’s December 2027 and none of AI 2027’s predictions are true. They’ll exaggerate their “quibbles” into successful predictions of problems in the AI 2027 timeline, while overlooking the extent to which they agreed.

I’ll give this author +10 bayes points for noticing Trump does unpredictable batshit stuff, and -100 for not realizing the real reason why Scott didn’t include any call out of that in AI 2027.

I’ll give this author +10 bayes points for noticing Trump does unpredictable batshit stuff

+10 bayes points

Has someone on LW already proposed a BayesCoin or have I just figured out how to steal lunch money from all rationalists at once

Without looking it up i can tell you that coin already exists and the value has crashed already

With a name like that and lesswrong to springboard its popularity, BayesCoin should be good for at least one cycle of pump and dump/rug-pull.

Do some actual programming work (or at least write a “white paper”) on tying it into a prediction market on the blockchain and you’ve got rationalist catnip, they should be all over it, you could do a few cycles of pumping and dumping before the final rug pull.

it’s a fucking harry potter reference

HP fanfic but house points are crypto and the chocolate frog cards are NFTs tracked on a magical blockchain

Non Fungible Toads

Edit: this is a nonsequitur, but my wife just shared this with me and it is delightful